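Contents

- I. Introduction

- II. Approach 1: AVCaptureSession + AVCaptureMovieFileOutput

- 1. Create the AVCaptureSession

- 2. Set up the audio and video inputs

- 3. Set up the file output

- 4. Add the video preview layer

- 5. Start capturing

- 6. Start recording

- 7. Stop recording

- 8. Stop capturing

- III. Approach 2: AVCaptureSession + AVAssetWriter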

I. Introduction

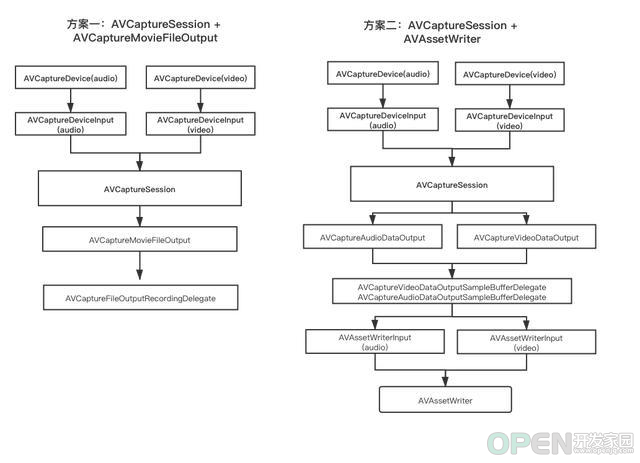

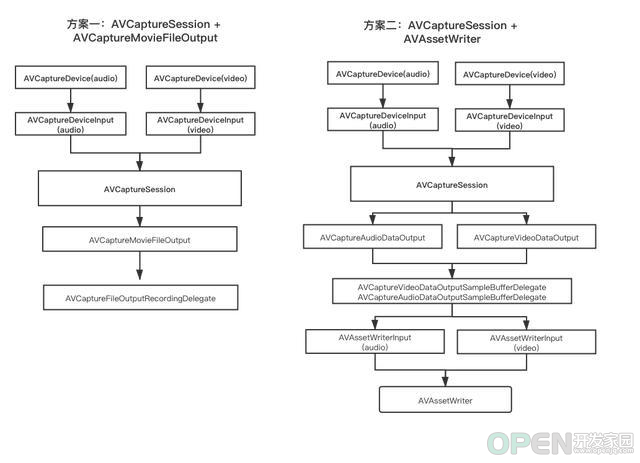

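AVCaptureSession is the central class of AVFoundation's capture pipeline. It manages the video and audio inputs (AVCaptureInput) and coordinates the captured outputs (AVCaptureOutput).

AVCaptureOutput can deliver what it captures in two ways:

- directly to a movie file, identified by a file URL (movieFileUrl);

- as a raw data stream (data).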
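To make the difference between the two output styles more concrete, here is a minimal sketch. The helper names `AddMovieFileOutput` and `AddVideoDataOutput` are hypothetical, and using `AVCaptureVideoDataOutput` for the raw data stream is an assumption, since the article does not name the class it has in mind.

```objectivec
#import <AVFoundation/AVFoundation.h>

// Hypothetical helper: attaches a file-based output (the style used in approach 1).
// Returns nil if the session refuses the output.
static AVCaptureMovieFileOutput *AddMovieFileOutput(AVCaptureSession *session) {
    AVCaptureMovieFileOutput *output = [[AVCaptureMovieFileOutput alloc] init];
    if (![session canAddOutput:output]) {
        return nil;
    }
    [session addOutput:output];
    return output;
}

// Hypothetical helper: attaches a raw sample-buffer output (assumed here to be
// the "raw data stream" style). The delegate receives each captured frame on
// the given queue, which should be a serial queue.
static AVCaptureVideoDataOutput *AddVideoDataOutput(AVCaptureSession *session,
                                                    id<AVCaptureVideoDataOutputSampleBufferDelegate> delegate,
                                                    dispatch_queue_t queue) {
    AVCaptureVideoDataOutput *output = [[AVCaptureVideoDataOutput alloc] init];
    [output setSampleBufferDelegate:delegate queue:queue];
    if (![session canAddOutput:output]) {
        return nil;
    }
    [session addOutput:output];
    return output;
}
```

With the file output, AVFoundation writes the movie file for you; with the data output, your delegate receives the sample buffers and decides what to do with them.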

A flow comparison of the two is shown below:

The two video recording approaches are explained in detail below:

II. Approach 1: AVCaptureSession + AVCaptureMovieFileOutput

1. Create the AVCaptureSession

// Import AVFoundation.framework
#import <AVFoundation/AVFoundation.h>

// Declare the property
@property (nonatomic, strong) AVCaptureSession *captureSession;

// Lazily create the AVCaptureSession
- (AVCaptureSession *)captureSession {
    if (!_captureSession) {
        _captureSession = [[AVCaptureSession alloc] init];
        // Set the resolution preset
        if ([_captureSession canSetSessionPreset:AVCaptureSessionPresetHigh]) {
            [_captureSession setSessionPreset:AVCaptureSessionPresetHigh];
        }
    }
    return _captureSession;
}

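Note: starting and stopping an AVCaptureSession (startRunning and stopRunning) are blocking calls that can take some time, so it is best to make them on a dedicated background queue rather than on the main thread.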

2. Set up the audio and video inputs

// Declare the properties
@property (nonatomic, strong) AVCaptureDeviceInput *videoInput;
@property (nonatomic, strong) AVCaptureDeviceInput *audioInput;

// Set up the video and audio input sources
- (void)setCaptureDeviceInput {
    NSError *error = nil;

    // 1. Video input source
    // Get the video capture device; the default is the back camera
    AVCaptureDevice *videoCaptureDevice = [AVCaptureDevice defaultDeviceWithMediaType:AVMediaTypeVideo];
    self.videoInput = [AVCaptureDeviceInput deviceInputWithDevice:videoCaptureDevice error:&error];
    // The input is nil if the device could not be opened, so check before adding
    if (self.videoInput && [self.captureSession canAddInput:self.videoInput]) {
        [self.captureSession addInput:self.videoInput];
    }

    // 2. Audio input source
    // Get the default audio capture device (the microphone)
    AVCaptureDevice *audioCaptureDevice = [AVCaptureDevice defaultDeviceWithMediaType:AVMediaTypeAudio];
    self.audioInput = [AVCaptureDeviceInput deviceInputWithDevice:audioCaptureDevice error:&error];
    if (self.audioInput && [self.captureSession canAddInput:self.audioInput]) {
        [self.captureSession addInput:self.audioInput];
    }
}
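The code above relies on the default video device, which is the back camera. If a specific camera is needed, one possible sketch is shown here. The helper name `CameraInputAtPosition` is hypothetical and not part of the original article; it assumes iOS 10 or later, where `defaultDeviceWithDeviceType:mediaType:position:` is available.

```objectivec
#import <AVFoundation/AVFoundation.h>

// Hypothetical helper: returns an input for the built-in wide-angle camera at
// the requested position, or nil if no such camera exists or it cannot be opened.
static AVCaptureDeviceInput *CameraInputAtPosition(AVCaptureDevicePosition position,
                                                   NSError **outError) {
    AVCaptureDevice *device =
        [AVCaptureDevice defaultDeviceWithDeviceType:AVCaptureDeviceTypeBuiltInWideAngleCamera
                                           mediaType:AVMediaTypeVideo
                                            position:position];
    if (!device) {
        return nil; // For example, when running in the simulator
    }
    return [AVCaptureDeviceInput deviceInputWithDevice:device error:outError];
}
```

For the front camera, this could be called as `CameraInputAtPosition(AVCaptureDevicePositionFront, &error)` and the result added to the session with the same `canAddInput:` check used above.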

3. Set up the file output

// Declare the properties
@property (nonatomic, strong) AVCaptureMovieFileOutput *movieFileOutput;
@property (nonatomic, strong) AVCaptureVideoPreviewLayer *previewLayer;

// Set up the file output
- (void)setDeviceFileOutput {
    // Create the movie file output
    self.movieFileOutput = [[AVCaptureMovieFileOutput alloc] init];

    // Add the output to the session first; its connections only exist once it has been added
    if (![self.captureSession canAddOutput:self.movieFileOutput]) {
        return;
    }
    [self.captureSession addOutput:self.movieFileOutput];

    // The connection between a capture input and this capture output within the session
    AVCaptureConnection *captureConnection = [self.movieFileOutput connectionWithMediaType:AVMediaTypeVideo];

    // Enable video stabilization when it is supported
    if ([captureConnection isVideoStabilizationSupported]) {
        captureConnection.preferredVideoStabilizationMode = AVCaptureVideoStabilizationModeAuto;
    }

    // Keep the recorded video orientation consistent with the preview layer
    captureConnection.videoOrientation = [self.previewLayer connection].videoOrientation;
}

4. Add the video preview layer

- (void)setVideoPreviewLayer {
    self.previewLayer.frame = [UIScreen mainScreen].bounds;
    [self.superView.layer addSublayer:self.previewLayer];
}

- (AVCaptureVideoPreviewLayer *)previewLayer {
    if (!_previewLayer) {
        _previewLayer = [AVCaptureVideoPreviewLayer layerWithSession:self.captureSession];
        _previewLayer.masksToBounds = YES;
        _previewLayer.videoGravity = AVLayerVideoGravityResizeAspectFill; // Aspect-fill mode
    }
    return _previewLayer;
}

5. Start capturing

// Declare the property
@property (nonatomic, strong) dispatch_queue_t sessionQueue;

// Start capturing
- (void)startCapture {
    // Use a serial queue, created once, so that start and stop calls run in order
    if (!self.sessionQueue) {
        self.sessionQueue = dispatch_queue_create("com.capturesession.queue", DISPATCH_QUEUE_SERIAL);
    }
    if (![self.captureSession isRunning]) {
        __weak __typeof(self) weakSelf = self;
        dispatch_async(self.sessionQueue, ^{
            [weakSelf.captureSession startRunning];
        });
    }
}

6. Start recording

// Start recording
- (void)startRecord {
    [self.movieFileOutput startRecordingToOutputFileURL:[self createVideoPath] recordingDelegate:self];
}
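The `createVideoPath` method called above is not defined in this article. One possible stand-in is sketched here, under the assumption that a unique `.mov` file in the temporary directory is acceptable; the function name `CreateVideoFileURL` and the choice of directory are hypothetical.

```objectivec
#import <Foundation/Foundation.h>

// Hypothetical stand-in for the article's -createVideoPath helper.
// Returns a unique file URL in the temporary directory with a .mov extension.
static NSURL *CreateVideoFileURL(void) {
    NSString *fileName = [[NSUUID UUID].UUIDString stringByAppendingPathExtension:@"mov"];
    NSString *path = [NSTemporaryDirectory() stringByAppendingPathComponent:fileName];
    return [NSURL fileURLWithPath:path];
}
```

A fresh file name on every call matters because recording to a URL where a file already exists fails, so the output path should not be reused between recordings.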

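The system calls the delegate when recording actually starts or stops. After you ask the output to start recording, nothing may have been written yet; the delegate method below is called once writing has really begun, and the same applies to stopping. If you need to act at the exact start or end of a recording, do it in these delegate callbacks:

// Implement the methods of the <AVCaptureFileOutputRecordingDelegate> protocol
#pragma mark - AVCaptureFileOutputRecordingDelegate

// Starting point: recording has begun
- (void)captureOutput:(AVCaptureFileOutput *)output didStartRecordingToOutputFileAtURL:(NSURL *)fileURL fromConnections:(NSArray<AVCaptureConnection *> *)connections {
}

// Recording has finished
- (void)captureOutput:(AVCaptureFileOutput *)output didFinishRecordingToOutputFileAtURL:(NSURL *)outputFileURL fromConnections:(NSArray<AVCaptureConnection *> *)connections error:(NSError *)error {
    NSLog(@"Video recording finished. File path: %@", [outputFileURL absoluteString]);
}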
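The finish callback above logs the path without looking at `error`. A minimal sketch of how the error could be interpreted follows; the helper name `RecordingFinishedSuccessfully` is hypothetical. It relies on `AVErrorRecordingSuccessfullyFinishedKey`, which AVFoundation places in the error's `userInfo` when a recording ended with an error but the file is still usable.

```objectivec
#import <AVFoundation/AVFoundation.h>

// Hypothetical helper: decides whether the recorded file is usable.
static BOOL RecordingFinishedSuccessfully(NSError *error) {
    if (!error) {
        return YES;
    }
    // An error can be reported even though the file was written completely,
    // for example when a size or duration limit was reached.
    NSNumber *finished = error.userInfo[AVErrorRecordingSuccessfullyFinishedKey];
    return finished.boolValue;
}
```

Inside the finish callback, this could be called with the `error` parameter before the file at `outputFileURL` is used.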

7. Stop recording

// Stop recording
- (void)stopRecord {
    if ([self.movieFileOutput isRecording]) {
        [self.movieFileOutput stopRecording];
    }
}

8. Stop capturing

// Stop capturing
- (void)stopCapture {
    if ([self.captureSession isRunning]) {
        __weak __typeof(self) weakSelf = self;
        dispatch_async(self.sessionQueue, ^{
            [weakSelf.captureSession stopRunning];
            // Release the session; the lazy getter creates a new, unconfigured one on next access
            weakSelf.captureSession = nil;
        });
    }
}

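III. Approach 2: AVCaptureSession + AVAssetWriter

For approach 2 and more, see: 基于AVFoundation实现视频录制的两种方式 (Two Ways to Implement Video Recording with AVFoundation)

This article comes from cnblogs (博客园). Author: reyzhang. When reposting, please credit the original link: https://www.cnblogs.com/reyzhang/p/16646673.html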

Mobile Development | Published: 2022-09-01 15:34 | Views: 324 | Comments: 0
